Polling firms released more election polls during the 2011 provincial general election than in any other recent provincial election.

Corporate Research Associates, Environics, MQO (MarketQuest Omnifacts) and Telelink produced a total of five polls. MQO issued two polls 10 days apart. Telelink and CRA produced polls for NTV and the Telegram, respectively, and Environics issued a single poll.

The Polls and What They Reported

News stories reported what the polling organizations reported to them.

MQO issued its first poll on September 20, one day after the campaign formally started.

MQO reported the responses to questions on party choice (“if an election were held today”), leader choice, government satisfaction and top issue. The news release gave the results for party choice and leader choice as percentages of decided respondents, but also included the percentage undecided, described as “noncommittal” in the leader choice paragraph.

The poll was based on a sample of 413 Newfoundlanders and Labradorians, with a margin of error of +/- 4.9%. It was conducted between Friday, September 16 and Sunday, September 18, 2011.

Aside from the news release, MQO apparently did not release anything else about the poll, including how the sample was chosen, any weighting, whether the poll was done alone or as part of a larger poll, or details of question wording and order.

MQO issued its second poll release on September 30.

The release reported on questions on party choice, leaders’ debate, government satisfaction and major issue. As with the earlier release, the party choice and leader questions were reported as a percentage of decided respondents, with the percentage undecided included.

The poll was based on a sample of 464 residents of Newfoundland and Labrador, with a margin of error of +/- 4.6 per cent. The research was conducted via phone and online between Wednesday, September 28 and Friday, September 30, 2011, using MQO’s research panel iView Atlantic. The sample was weighted regionally to ensure proper representation of the province as a whole.

The release did not include information on the research panel or how MQO combined the panel sample with the telephone sample. The release indicated only that the sample was weighted regionally and gave no indication of what standard was used to determine the appropriate weighting.

The release did not give any information on question wording, order or other aspects of how the poll was conducted. The two releases do not indicate whether MQO conducted both polls using the same methodology, so it is unclear whether the results can be compared.

This release included two graphics showing some of the responses for the leader choice and party choice questions.

On October 3, NTV reported a poll it commissioned from Telelink. NTV reported on party choice and leader choice. Telelink conducted the poll on October 1 and 2, with a sample comprising 511 residents of the province. Reported margin of error was plus or minus 4.3 percentage points.

NTV reported the results for the party choice question (how the respondent intended to vote) as a percentage of all respondents, including those who answered “don’t know” or gave no answer.

Telelink probed the undecided/refused and produced a second set of party choice numbers combining decided plus leaning.

On October 4, NTV reported on a question on Muskrat Falls following up on an earlier Telelink poll it had commissioned in February.

Environics issued a poll result on October 5 through Canadian Press.

Thirty-eight per cent of respondents backed the incumbent Progressive Conservatives, compared to 23 per cent for the NDP and nine per cent for the Liberals.

Thirty per cent were undecided.

The online poll was conducted by Environics Research Group and provided exclusively to The Canadian Press.

Unlike traditional telephone polling, in which respondents are randomly selected, the Environics survey was conducted online from Sept. 29 to Oct. 4 among 708 people.

The respondents were chosen from a larger pool of people who were recruited and compensated for participating.

The non-random nature of online polling makes it impossible to determine the statistical accuracy of how the poll reflects the opinions of the general population.

Neither Environics nor Canadian Press released any other information on the poll.

The Telegram commissioned Corporate Research Associates to conduct a poll exclusively for the newspaper.

The Telegram will roll out results of the wide-ranging poll — which has a large sample size of 800 — in the coming days.

The poll was conducted between Sept. 29 and Oct. 3 and has a margin of error of plus or minus 3.5 percentage points with a confidence level of 95 per cent.

The Telegram reported results of the poll from October 6 to October 8 for questions on major issue, party choice, second choice, leader choice and government satisfaction.

The October 6 edition reported the party choice question as percentages of all respondents. The Telegram reported on regional results for some questions but did not indicate separate margins of error for the regional results. CRA poll reports obtained from the provincial government under access to information laws typically do not show margins of error for these sub-sample breakouts.
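To see why the missing sub-sample margins matter, here is a minimal sketch of the usual margin-of-error calculation. It assumes a simple random sample, a 95 per cent confidence level and the worst-case 50/50 split, none of which the Telegram or CRA confirmed as the method for this poll. The function name `margin_of_error` and the regional sub-sample size of 200 are hypothetical, chosen only for illustration, since the Telegram did not report regional sample sizes.

```python
import math


def margin_of_error(n: int, z: float = 1.96, p: float = 0.5) -> float:
    """Margin of error, in percentage points, for a sample of size n.

    Assumes simple random sampling. z = 1.96 corresponds to 95% confidence
    and p = 0.5 is the worst-case proportion.
    """
    return z * math.sqrt(p * (1.0 - p) / n) * 100.0


full_sample = 800          # sample size reported by the Telegram
regional_subsample = 200   # hypothetical: regional sizes were not reported

print(f"n = {full_sample}: +/- {margin_of_error(full_sample):.1f} points")
print(f"n = {regional_subsample}: +/- {margin_of_error(regional_subsample):.1f} points")
```

For the full sample of 800, this formula gives roughly the 3.5 points the Telegram reported. Any regional breakout is a smaller sample and so carries a wider margin than the headline figure.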

The Telegram did not release any other details on the poll including specific wording of questions, sequencing or weighting.

What the Party Choice Question Measured

The party choice question is the one question pollsters ask for which a genuinely objective confirmation exists: the election result.

The problem comes, however, in determining what the pollsters intended to measure when they posed the party choice question. There is what may be called a standard question (“if an election were held tomorrow…”). However, at least one of the polls involved a non-standard question along the lines of “who will you vote for next week?”

Some of the polls apparently asked non-standard questions.

None of the news releases or news stories indicated what the poll results for the party choice question were supposed to show. That is, one can read the releases or news stories and not see clearly whether the numbers were intended to show each party's share of the vote on election day.

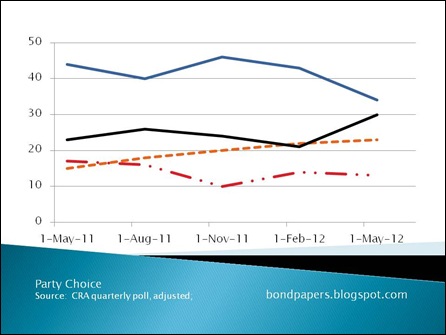

Some pollsters, such as CRA, report results that discard all undecided responses and treat the specific party choices as if they were 100% of the responses.

The Telegram story on October 6 included figures reported that way at the end of the front page story:

Among decided voters across all regions, 59 per cent of them said they would vote PC, 25 per cent NDP and 16 per cent Liberal.

It also included the results of a probing question put to the undecided/refused respondents (26-27% of respondents).

Among the undecideds or those who refused to state their preference, 26 per cent are leaning towards the PCs, while 21 per cent are leaning towards the NDP and 14 towards the Liberals.

Some 38 per cent said they don’t know.

The Telegram did not report its own results for decided + leaners.

Based strictly on the limited information provided in the news stories and/or news releases during the campaign, it is impossible to say for certain what the pollsters intended to measure. As such it becomes very difficult to compare the polls – as reported – for accuracy and consistency.

Take, for example, the CRA report for the Telegram. According to the newspaper account, there are three separate potential sets of figures in response to the single party choice question. There are the raw percentages reported on the front page of the Thursday edition (PC = 44%, for example). Then there are the decideds-only figures reported in the third-last paragraph of the story (PC = 59%).

And then there would be the possible decided plus leaning response. To figure this one out, you’d have to do some math to calculate what 26% of 26% is. That’s the share of all respondents who are PC leaners within the undecided/don’t know/refused group from the first set of percentages. Do the math, though, and you’d get 44% + 7% = 51%.
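The three sets of PC figures can be worked through in a few lines. This is only a sketch of the arithmetic using the numbers in the Telegram story. It assumes the undecided/refused group is 26% of all respondents (the story gives 26-27%) and that the decideds-only figure simply drops that group. The function names are hypothetical, and CRA's actual method may differ.

```python
def decided_only(raw_share: float, undecided_share: float) -> float:
    """Rescale a percent of all respondents to a percent of decided respondents."""
    return raw_share / (100.0 - undecided_share) * 100.0


def decided_plus_leaning(raw_share: float, undecided_share: float,
                         leaning_share: float) -> float:
    """Add leaners to the raw share.

    leaning_share is the percent of the undecided/refused group leaning
    toward the party, so it is first converted to a percent of all respondents.
    """
    return raw_share + undecided_share * leaning_share / 100.0


pc_raw = 44.0       # PC share of all respondents (front-page figure)
undecided = 26.0    # undecided/refused share of all respondents (assumed 26%)
pc_leaning = 26.0   # percent of undecided/refused leaning PC

pc_decided = decided_only(pc_raw, undecided)
pc_with_leaners = decided_plus_leaning(pc_raw, undecided, pc_leaning)

print(f"Raw: {pc_raw:.0f}%")
print(f"Decideds only: {pc_decided:.0f}%")
print(f"Decided plus leaning: {pc_with_leaners:.0f}%")
```

The same poll, the same question and the same party produce three different numbers depending on which denominator is used.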

But what are these numbers supposed to represent?

There’s the rub.

Based on information SRBP obtained several years ago, it appears that none of the polling firms screens respondents to exclude non-voters. They do not identify voters, specifically. Pollsters in Newfoundland and Labrador simply poll a sample of those eligible to vote.

That means that the correct comparison for their polling numbers is not with each party's share of the votes cast on polling day but with its share of the entire population of eligible voters.
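A rough sketch of that comparison: to put an election result on the same footing as a poll of eligible voters, multiply the party's share of votes cast by the turnout. The function name `share_of_eligible` and the figures below are hypothetical, picked as round numbers for illustration. They are not results from 2011.

```python
def share_of_eligible(vote_share: float, turnout: float) -> float:
    """Convert a percent of votes cast into a percent of all eligible voters."""
    return vote_share * turnout / 100.0


# Hypothetical figures, for illustration only.
vote_share = 50.0   # party's percent of votes cast
turnout = 60.0      # percent of eligible voters who voted

print(f"{share_of_eligible(vote_share, turnout):.0f}% of eligible voters")
```

On those made-up numbers, a party that took half the votes cast would have the support of only 30% of eligible voters, and that is the figure a poll of all eligible voters should be measured against.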

You can see this in the CRA question reported by the Telegram on October 6. The initial question asked respondents which party they were most likely to vote for. If CRA had screened out non-voters, none of the replies should have been “I won’t vote”. But, in fact, 3% indicated they did not plan to vote.

The same is basically true of all the poll results, to one degree or another. They measure party choice by eligible voters. In the process, they capture - or are supposed to capture - people who are genuinely undecided, people who claim to be undecided and those who will not vote.

All are important in presenting a clear picture of public opinion as it actually was at the time the poll was taken.

Anything else is a distortion.

- srbp -

![CRA Q1-12[4] CRA Q1-12[4]](http://lh6.ggpht.com/-fsybWpxfUd4/T8-Q_BWevVI/AAAAAAAADWM/6iXbY8ETjDA/CRA%252520Q1-12%25255B4%25255D_thumb%25255B3%25255D.jpg?imgmax=800)